Computer Vision in Manufacturing: The Use Cases Are Obvious. The Implementation Is Broken.

Computer vision in manufacturing is proven. But most deployments cost six figures and take months — pricing out the majority who need it most. This post maps real use cases, real costs, and what a different approach looks like with small, task-specific models.

Manufacturing has one of the highest concentrations of computer vision opportunity of any industry on earth. Every production line, every assembly station, every finished product moving toward shipping is a source of visual data that could be analyzed, monitored, and acted on in real time.

The use cases aren't speculative. They're proven. Volvo is scanning every assembled vehicle for surface defects at end of line. Bosch built a full traceability system for diesel injector nozzles with OCR, barcode verification, and robot positioning — in one month. Electronics manufacturers are replacing manual PCB inspection with automated visual systems that catch what human eyes miss after an eight-hour shift.

But here's what those case studies don't say out loud: most of them took months and six-figure budgets to deploy. And they were built by organizations with dedicated engineering teams, established ML infrastructure, and the runway to absorb a complex, uncertain implementation.

For the other 99% of manufacturers — the ones without a team of CV engineers, without a $500K innovation budget, without months to spend before seeing a result — the gap between "we know CV would help" and "we have CV running in production" remains enormous.

This post covers what computer vision in manufacturing is used for today, what it costs, why implementations fail, and what a different approach looks like.

What Manufacturers Are Actually Using CV For

The applications aren't concentrated in one corner of the factory. Computer vision has found its way into nearly every stage of modern manufacturing operations.

🔍 Visual Quality Inspection

The most mature and widely deployed use case. CV systems detect scratches, dents, misalignments, missing components, cosmetic defects, and dimensional anomalies directly on the production line — at speeds and consistency levels that manual inspection cannot match.

Volvo Cars' deployment with UVeye at their Torslanda plant is a flagship example: multi-camera 360-degree scans of every assembled vehicle at end of line, catching surface defects that would previously have required manual walkarounds. The key driver isn't just accuracy — it's consistency. A CV system doesn't get tired at hour seven of a shift.

🏷️ OCR, Code Verification, and Traceability

Reading engraved marks, printed text, barcodes, and serial numbers to ensure components are correctly routed, packages are correctly labeled, and products are traceable through the supply chain. Bosch's Curitiba facility uses a three-camera setup to read nozzle codes and packaging labels for diesel injector traceability — a system that combined three previously separate inspection processes into one, reducing both complexity and cost.

⚙️ Assembly Verification

Confirming that the correct component was used, that it was oriented correctly, and that each assembly step was completed before the product moves downstream. A missed step caught by vision at station three costs nothing. The same miss caught after final assembly can cost everything.
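
As a rough illustration of that check, here is a minimal sketch. It assumes a detector that returns one class label per detected part; the station spec, the label names, and the `verify_assembly` helper are hypothetical and not taken from any deployment mentioned in this post.

```python
from collections import Counter


def verify_assembly(expected: dict[str, int], detected_labels: list[str]) -> dict:
    """Compare detector output against the parts a station should contain."""
    expected_counts = Counter(expected)
    detected_counts = Counter(detected_labels)
    # Counter subtraction keeps only positive differences, so each result
    # lists exactly the parts that are short or surplus.
    missing = dict(expected_counts - detected_counts)
    unexpected = dict(detected_counts - expected_counts)
    return {
        "ok": not missing and not unexpected,
        "missing": missing,
        "unexpected": unexpected,
    }


# Hypothetical spec for station three: four screws and one gasket.
station_3_spec = {"screw": 4, "gasket": 1}
result = verify_assembly(station_3_spec, ["screw", "screw", "gasket", "screw"])
print(result)
```

In this made-up run the detector found only three screws, so the part would be held at the station instead of moving downstream.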

🤖 Robot Guidance and Machine Tending

2D and 3D vision systems for bin picking, part localization, robot alignment, and machine loading. As robotics adoption accelerates in manufacturing, vision guidance is increasingly the layer that makes robots flexible enough to handle real production variability rather than perfectly staged laboratory conditions.

🦺 Safety and Compliance Monitoring

Detecting PPE violations, unsafe movements, and restricted-area entry from existing camera infrastructure. Companies like Protex AI are turning standard CCTV networks into active safety monitoring systems — no new hardware required, just the intelligence layer on top of what's already installed.

📊 Process Monitoring and Predictive Maintenance

Watching production conditions continuously to catch drift, jams, and early signs of equipment degradation before they become failures. A German manufacturer working with Eviden deployed edge computer vision across assembly lines covering diverse product types — motors, PCBs, generators — significantly reducing quality inspection time.

📦 Packaging and Label Inspection

Verifying that labels, barcodes, seals, and bundled products match expected output before they leave the facility. A mislabeled shipment discovered at the customer's warehouse is orders of magnitude more expensive than one caught at the line.
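
A label check of this kind can be sketched in a few lines. The example below assumes EAN-13 barcodes and a decoder that hands back the digits as a string; both are assumptions made for illustration, and `verify_label` is a hypothetical helper. The check digit rule itself is the standard EAN-13 one.

```python
def ean13_check_digit(first_twelve: str) -> int:
    """Standard EAN-13 check digit: weights alternate 1, 3 from the left."""
    total = sum(int(d) * (3 if i % 2 else 1) for i, d in enumerate(first_twelve))
    return (10 - total % 10) % 10


def verify_label(decoded: str, expected: str) -> str:
    """Classify one barcode read against the code the order expects."""
    if len(decoded) != 13 or not (decoded.isascii() and decoded.isdigit()):
        return "unreadable"
    if ean13_check_digit(decoded[:12]) != int(decoded[12]):
        # The digits are inconsistent, so the camera or decoder misread them.
        return "misread"
    return "match" if decoded == expected else "wrong_label"


expected_code = "4006381333931"
for read in ("4006381333931", "4006381333932", "4006381333948", "40063"):
    print(read, verify_label(read, expected_code))
```

Separating "misread" from "wrong_label" matters on the line: the first calls for a rescan, the second for pulling the package.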

What It Actually Costs — And Why

Public enterprise case studies almost never disclose total project budgets. What we do know comes primarily from system integrators and implementation vendors, and the numbers are sobering.

A basic AI vision software project runs around $30,000 with a 6–10 week development timeline. Medium-complexity systems reach $55,000 over 12–16 weeks. Advanced systems requiring high accuracy and MES/ERP integration exceed $90,000 and can take 20–30 weeks just for development.

Pilot deployments typically run $50,000–$100,000 for the first three to five months. Enterprise rollouts across multiple lines can push total investment to $150,000–$500,000 or more.

Bosch's traceability system — a fairly sophisticated deployment with OCR, robot positioning, and PLC communication — was built in about one month. That's the optimistic anchor for a well-scoped, narrow project. Most manufacturers aren't starting with that level of organizational readiness.

The honest cost breakdown tells you why:

- The model itself is rarely the expensive part. Model training is increasingly fast and accessible.

- Data annotation is expensive and time-consuming — and in manufacturing, high-quality defect examples are often rare by definition.

- Hardware setup — camera positioning, lighting, triggering, fixture design — can make or break the system before the algorithm is even involved.

- Integration with PLCs, MES, ERP systems, and legacy databases is often where projects go significantly over budget and over timeline.

- Change management — retraining quality staff, managing false positive rates, building operator trust in the system — takes longer than most project plans account for.

Why Implementations Fail

The most important insight from studying manufacturing CV deployments at scale is this: the projects that fail rarely fail because the model wasn't accurate enough.

They fail for operational reasons.

False positives erode trust faster than false negatives. An inspector who sees the CV system flag a good part as defective three times in a shift will start ignoring the alerts. Once that trust is gone, recovering it is harder than the original implementation. Getting false positive rates low enough to maintain operator confidence is often the hardest technical challenge in manufacturing CV — not raw recall.
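
To make the trade-off concrete, here is a minimal sketch. It assumes the model outputs a defect score per part and that a labeled validation set exists; the `alert_metrics` helper and the sample scores are invented for illustration.

```python
from typing import Sequence


def alert_metrics(scores: Sequence[float], is_defect: Sequence[bool], threshold: float) -> dict:
    """Count alert outcomes when every score >= threshold raises an alert."""
    tp = fp = fn = tn = 0
    for score, defect in zip(scores, is_defect):
        flagged = score >= threshold
        if flagged and defect:
            tp += 1
        elif flagged:
            fp += 1  # good part flagged: the alert operators learn to ignore
        elif defect:
            fn += 1  # defect that escaped
        else:
            tn += 1
    return {
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "false_alarm_rate": fp / (fp + tn) if fp + tn else 0.0,
    }


# Illustrative validation data: three real defects among eight parts.
scores = [0.95, 0.80, 0.65, 0.60, 0.40, 0.30, 0.20, 0.10]
is_defect = [True, True, False, True, False, False, False, False]

loose = alert_metrics(scores, is_defect, threshold=0.5)
strict = alert_metrics(scores, is_defect, threshold=0.7)
print(loose)
print(strict)
```

On this toy data, raising the threshold removes the false alarm but lets one defect through. Choosing where to sit on that curve is a production decision, not only a modeling one.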

Data variability is underestimated. Defect examples are rare by design: a well-run factory produces mostly good parts. Lighting changes from shift to shift, materials change between supplier batches, products get updated. A model that worked last quarter can drift as the world around it changes, with no obvious signal that something is wrong until defects start escaping.

Legacy integration absorbs budget. PLC communication, MES interfaces, ERP connectors, database schemas from fifteen years ago — this integration layer can represent the majority of total project cost and is rarely visible in the upfront estimate.

Pilots don't automatically scale. A system that works perfectly on one line often needs significant recalibration, retraining, and process adjustment when deployed on a second line with slightly different equipment, lighting, or products. Each new environment exposes edge cases the original training data never saw.

Workforce resistance is real. Quality inspectors who have done their job well for years don't automatically trust a camera to replace their judgment. Unless the rollout is framed as augmentation — retraining them into system operators rather than replacing them — adoption struggles.

A Different Approach

The pattern across successful manufacturing CV deployments is surprisingly consistent, and it points to a different way of thinking about the problem.

Start with one bottleneck, not a transformation

Every successful rollout in the case studies above started narrow. Bosch started with nozzle traceability. Volvo started with end-of-line surface inspection. The German Eviden deployment started on a specific assembly line before expanding across product categories.

This isn't timidity — it's the correct strategy. A narrow, well-scoped pilot generates real production data, exposes real edge cases, and builds organizational confidence before you commit to a plant-wide program. It also means you see ROI before you've spent $500K.

Every vfrog enterprise engagement starts with a proof of concept on a single, high-value bottleneck. The goal is to prove the model works on your data, in your environment, against your specific problem — before anything scales. Our pilots start at $500. Not $50,000.

Fix optics and process flow before touching the model

In manufacturing environments, lighting, camera positioning, triggering, and fixture design are often the difference between a system that works and one that doesn't, regardless of what model is running behind it. A poorly lit image of a defect is a poorly lit image. No model downstream can fix that.

Our team works alongside customers on the physical setup — camera angles, lighting conditions, image quality standards — because we've learned from experience that getting this right at the start is faster than debugging model performance caused by hardware issues later.

Reduce integration friction with dedicated support

Integration with PLCs, MES, ERP, and legacy factory infrastructure is where projects consistently go over budget. It's also where the most institutional knowledge lives — in the specific quirks of a twenty-year-old SCADA system that nobody has fully documented.

For enterprise deployments that require it, our forward deployed engineer (FDE) support covers integration work directly, so the CV implementation doesn't stall waiting for a separate IT project to unblock it.

Small, task-specific models produce fewer false positives

This is where vfrog's core architecture pays off in manufacturing specifically.

A generic large vision model trained on millions of object categories carries the cost of all that knowledge, including all the ways it can be wrong about your specific product. When you ask it to inspect a precision component for a defect type it has seen only a handful of times in its training data, it has little to go on, and the resulting uncertainty tends to show up as false positives.

A model trained specifically on your product, your defect classes, and your production environment allocates all of its capacity to the problem you actually have. The result is higher precision on the specific task — which means fewer false alerts, less operator fatigue, and faster adoption. Lower operating costs and lower latency on edge hardware are additional benefits of running a model that isn't carrying irrelevant parameters.

Synthetic data solves the rare defect problem

The fundamental data challenge in manufacturing CV is that defect examples are rare. A well-run operation produces mostly good parts. By definition, your training dataset is imbalanced — you have thousands of good examples and dozens of defect examples, and the defects are exactly what the model needs to learn.
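
A quick calculation shows why this imbalance is dangerous. The counts below are illustrative assumptions (5,000 good images, 50 defect images), chosen to match the "thousands versus dozens" ratio described above.

```python
def always_good_baseline(n_good: int, n_defect: int) -> dict:
    """Score a degenerate model that labels every part as good.

    Assumes n_defect > 0 so that defect recall is defined.
    """
    total = n_good + n_defect
    return {
        "accuracy": n_good / total,       # every good part counted as correct
        "defect_recall": 0 / n_defect,    # no defect is ever caught
    }


# Illustrative counts, not measurements from a real line.
baseline = always_good_baseline(n_good=5000, n_defect=50)
print(f"accuracy={baseline['accuracy']:.4f}, defect_recall={baseline['defect_recall']:.1f}")
```

A model that never flags anything still scores about 99% accuracy on this dataset, which is why headline accuracy says little here and why the defect class needs more examples.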

Our synthetic data pipeline converts 2D product images into photorealistic 3D renders placed in real production environments. For manufacturing, this means we can generate realistic defect examples — scratches at different angles, dents under different lighting conditions, misalignments at varying severity levels — without waiting for the production line to produce enough real examples to train on. Edge cases that would take months to collect naturally can be synthesized in hours.

Drift is a managed process, not a surprise failure

Every manufacturing CV system drifts over time. Materials change. Lighting shifts. New product variants arrive. Suppliers update their components. The model that was 95% accurate in Q1 may be 88% accurate by Q3 — and the decline is invisible until defects start escaping.

The right response isn't periodic manual retraining. It's a pipeline that continuously collects edge cases from production inference, surfaces them for human review, and incorporates them back into the training data. As you operate, the model improves. New edge cases encountered in production become training examples for the next iteration. Drift becomes a managed, incremental process rather than a silent failure mode.
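
One way to picture such a loop is sketched below. It is not a description of vfrog's pipeline: the `ReviewQueue` class, the score band, and the thresholds are all hypothetical. It treats a rising share of uncertain predictions as one possible drift signal among several.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Prediction:
    image_id: str
    defect_score: float  # assumed model output in [0, 1]


class ReviewQueue:
    """Queue uncertain predictions for human review and watch their share."""

    def __init__(self, low=0.35, high=0.65, window=500, max_uncertain_share=0.10):
        self.low, self.high = low, high
        self.max_uncertain_share = max_uncertain_share
        self.recent = deque(maxlen=window)  # rolling record of uncertain flags
        self.pending = []                   # images waiting for a human label

    def observe(self, pred: Prediction) -> bool:
        uncertain = self.low <= pred.defect_score <= self.high
        self.recent.append(uncertain)
        if uncertain:
            self.pending.append(pred)
        return uncertain

    def drift_suspected(self) -> bool:
        # Only judge once the rolling window is full.
        if len(self.recent) < self.recent.maxlen:
            return False
        return sum(self.recent) / len(self.recent) > self.max_uncertain_share


# Tiny window so the example is easy to follow by hand.
queue = ReviewQueue(window=4, max_uncertain_share=0.25)
for i, score in enumerate([0.90, 0.10, 0.50, 0.45]):
    queue.observe(Prediction(image_id=f"img-{i}", defect_score=score))

print([p.image_id for p in queue.pending], queue.drift_suspected())
```

Reviewed items from `pending` would then be labeled and added to the next training round, which is the incremental process described above.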

The Opportunity Nobody Is Capturing

The manufacturing deployments that make industry press — Volvo, Bosch, large German industrials — represent a tiny fraction of the facilities where CV would deliver clear ROI. They're visible because they had the resources, the teams, and the tolerance for a long, expensive implementation process.

The mid-market manufacturer with one high-defect product line. The food and beverage producer who wants packaging verification but not a six-month project. The automotive supplier who knows their manual inspection process is the bottleneck but can't justify $200K to fix it.

These are the 99% the current tools weren't built for. And they represent the majority of the market.

Computer vision in manufacturing doesn't have to start at $50,000 and six months. It can start with one use case, one model, one bottleneck — proven in production before anything scales.

That's what we're building at vfrog.

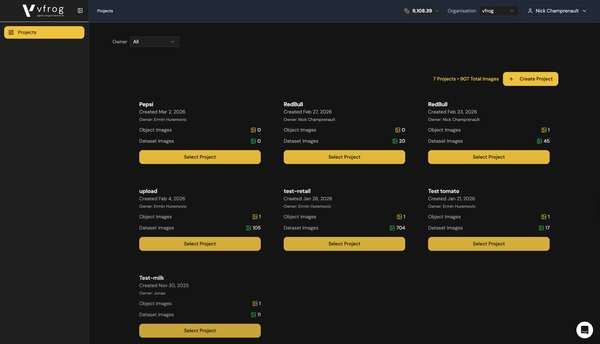

vfrog is an agentic computer vision platform that lets any developer or enterprise build and deploy task-specific small vision models — no ML expertise required. Enterprise pilots start at $600. Self-serve platform access starts at $49/month.