Smartglasses Are Here. Who's Going to Build the Applications?

MWC 2026 just confirmed what the industry has been building toward for years: smartglasses are no longer a gimmick.

Alibaba unveiled the Qwen AI Glasses — balanced, comfortable, and surprisingly normal-looking for a device with a screen embedded in the lens. Google demoed Android XR prototype glasses to packed crowds in Barcelona. Meta is producing Ray-Ban Display glasses faster than it can ship them internationally. Samsung, Deutsche Telekom, and a dozen other players all showed up with hardware that, for the first time, you'd actually consider wearing in public.

The design problem is largely solved. The hardware is mature. 2026 is shaping up to be the breakout year analysts have been predicting.

But walking away from MWC, one question dominates everything else:

Who is actually going to build anything useful on them?

The Developer Gap Nobody Is Talking About

Every major platform shift in tech history — the PC, the internet, the smartphone — was ultimately defined not by the hardware, but by the ecosystem of applications built on top of it. The App Store didn't succeed because the iPhone had a great touchscreen. It succeeded because millions of developers built things nobody had imagined.

Smartglasses are about to face the same inflection point. And there's a problem.

Smartglasses are fundamentally visual-first devices. Their entire value proposition depends on what they can see, understand, and react to in the physical world. Object recognition. Scene context. Real-time inference. Spatial awareness. Every compelling use case, consumer or enterprise, is built on computer vision at its core.

And the vast majority of developers are not computer vision experts.

The obvious answer is: just buy ready-made models from the big labs. Use the Google Cloud Vision API, OpenAI's vision models, or one of the growing number of dedicated small models available off the shelf.

It sounds reasonable. But it misses three problems that compound each other in production.

Model drift. Generic models are trained on static datasets. The real world changes — new product SKUs hit retail shelves, new fish species regulations come into effect, new safety equipment standards replace old ones. A model that can't be continuously updated against your specific data will degrade in accuracy over time, silently, until it fails when it matters.
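One lightweight way to catch this kind of silent degradation is to track the model's average confidence over a sliding window of production predictions and compare it with the level seen at validation time. The sketch below is illustrative only: the `DriftMonitor` class, the window size, and the 0.10 tolerance are assumptions made for the example, and a drop in confidence is only a proxy for drift. Confirming it still requires labeled samples from production.

```python
from collections import deque


class DriftMonitor:
    """Flags possible drift when rolling mean confidence falls below a baseline.

    Hypothetical helper: window size and tolerance are illustrative defaults.
    """

    def __init__(self, baseline: float, window: int = 100, tolerance: float = 0.10):
        self.baseline = baseline
        self.tolerance = tolerance
        self.scores = deque(maxlen=window)  # keeps only the most recent scores

    def observe(self, confidence: float) -> bool:
        """Record one prediction confidence; return True if drift is suspected."""
        self.scores.append(confidence)
        if len(self.scores) < self.scores.maxlen:
            return False  # window not full yet, too early to judge
        rolling_mean = sum(self.scores) / len(self.scores)
        return rolling_mean < self.baseline - self.tolerance


monitor = DriftMonitor(baseline=0.90, window=5)
healthy = [monitor.observe(0.90) for _ in range(5)]
degraded = [monitor.observe(0.60) for _ in range(5)]
print(healthy[-1], degraded[-1])
```

In practice the flagged frames would be routed to annotation and retraining rather than just logged.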

No dedicated data pipeline. Maintaining high accuracy on a specific task requires a purpose-built pipeline: domain-specific annotation, regular retraining on new examples, and a feedback loop that captures edge cases from production. Buying a model from a lab gives you a snapshot. It doesn't give you the infrastructure to keep that model accurate as your use case evolves.

Size constraints for edge deployment. Even the most capable small models from major labs are designed for cloud inference or high-end devices. Running them on smartglass hardware — with its constrained compute, limited battery, and requirement for sub-100ms inference — requires a level of optimization that off-the-shelf models simply aren't built for. The model needs to be micro-sized by design, not compressed as an afterthought.

If this gap isn't bridged, we'll get a repeat of what happened with enterprise CV over the last decade: powerful hardware, obvious use cases, and almost no adoption because the implementation cost was too high for anyone but the largest organizations to absorb.

What Smartglass Applications Actually Require

Before we explore specific applications, it's worth understanding why smartglasses create unique technical constraints that make this problem harder — and why those constraints actually favor a specific architectural approach.

Compute is constrained by design. You cannot run a 70-billion-parameter foundation model on your face. Smartglass hardware, even with chips like Qualcomm's new Snapdragon Wear Elite, has a fraction of the compute available to a cloud server. Generic large models are simply not an option for on-device inference.
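A rough back-of-the-envelope calculation shows the scale of the gap. The sketch below estimates weight storage only (activations and runtime overhead are ignored), and the 5-million-parameter detector is a hypothetical stand-in for a small task-specific model, not a figure from any particular product.

```python
def model_size_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


# 70B parameters stored as 16-bit floats (2 bytes each)
foundation_fp16 = model_size_gb(70e9, 2)

# Hypothetical 5M-parameter detector stored as 8-bit integers (1 byte each)
small_int8 = model_size_gb(5e6, 1)

print(f"70B fp16 model:  {foundation_fp16:.0f} GB of weights")
print(f"5M int8 model:   {small_int8 * 1000:.0f} MB of weights")
```

Under these assumptions the foundation model needs roughly 140 GB for its weights alone, while the small detector fits in about 5 MB.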

Latency cannot be hidden. On a phone, a 500ms API call is barely noticeable. On glasses, a 500ms delay between what you see and what the model tells you creates a jarring, unusable experience. Real-time means real-time. Applications that depend on cloud inference for every frame will feel broken.
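To put that delay in terms of what the wearer sees, it helps to count how many camera frames go by while the application waits for an answer. The sketch below assumes a 30 fps camera, which is an illustrative figure rather than a spec of any particular device, and `frames_behind` is a hypothetical helper.

```python
def frames_behind(latency_ms: float, fps: float = 30.0) -> int:
    """Number of whole camera frames that elapse while waiting for a result."""
    return int(latency_ms * fps // 1000)


for latency in (20, 100, 500):
    print(f"{latency} ms latency -> {frames_behind(latency)} frames behind")
```

At 30 fps, a 500ms round trip means the overlay describes a scene 15 frames old, while a 20ms on-device result arrives within the current frame.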

Connectivity is unreliable in the field. Many of the most compelling smartglass use cases — construction sites, agricultural land, remote rivers — happen exactly where 4G and 5G coverage is inconsistent or absent. Applications that depend on a stable connection to function will fail precisely when they're needed most.

Accuracy has to be trusted. On a phone, a wrong search result is annoying. On glasses, a misidentified species, a missed safety hazard, or an incorrect component identification during a repair can have real consequences. Users will only rely on glasses-based CV if the accuracy is high enough to act on.

These four constraints point to the same architectural answer: small, task-specific vision models, running on-device, trained for precision on a defined problem.

Four Use Cases Where It Matters

🏗️ Construction and Field Safety (B2B)

Construction remains one of the most dangerous industries in the world. A significant proportion of workplace fatalities happen on job sites where the hazards were visible — but nobody caught them in time.

Smartglasses change the calculus entirely. A worker walking a site with CV-enabled glasses can have every frame of their field of vision continuously analyzed for:

- Missing or non-compliant PPE (hard hats, high-visibility vests, safety gloves)

- Unauthorized personnel in restricted zones

- Structural elements that show signs of stress or misalignment

- Equipment positioned in unsafe proximity to workers

The key word is continuously. Not a periodic audit. Not a supervisor walking the floor every few hours. A persistent, real-time layer of safety intelligence that never gets tired, never gets distracted, and flags issues the moment they appear.

For this to work, noticeable latency cannot be tolerated. A worker who walks into a restricted zone needs a warning in under a second, not after a round-trip to a cloud server. The model needs to run on-device, be trained specifically on the relevant hazard classes for that site, and deliver accuracy high enough that workers trust the alerts rather than ignoring them.
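The PPE check itself can be simple once a detector has produced bounding boxes. The sketch below assumes a hypothetical on-device detector that emits `person` and `hard_hat` detections as pixel boxes, and it uses a deliberately crude rule: a person counts as wearing a hard hat if a hat's center falls in the top third of their box. The class names, the `Detection` type, and the top-third heuristic are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str   # hypothetical class names: "person", "hard_hat"
    box: tuple   # (x1, y1, x2, y2) in pixels, origin at top-left


def _center(box):
    x1, y1, x2, y2 = box
    return ((x1 + x2) / 2, (y1 + y2) / 2)


def workers_missing_hard_hat(detections):
    """Return person detections with no hard hat centered in their head region."""
    people = [d for d in detections if d.label == "person"]
    hats = [d for d in detections if d.label == "hard_hat"]
    missing = []
    for person in people:
        x1, y1, x2, y2 = person.box
        head_bottom = y1 + (y2 - y1) / 3  # treat the top third of the box as the head
        has_hat = any(
            x1 <= cx <= x2 and y1 <= cy <= head_bottom
            for cx, cy in (_center(h.box) for h in hats)
        )
        if not has_hat:
            missing.append(person)
    return missing


frame = [
    Detection("person", (0, 0, 100, 300)),
    Detection("hard_hat", (30, 0, 70, 40)),
    Detection("person", (200, 0, 300, 300)),  # no hat detected for this worker
]
print(workers_missing_hard_hat(frame))
```

Because the rule runs on detections rather than raw pixels, it adds almost nothing to the per-frame cost; the detector is where the latency budget goes.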

The business case is straightforward: one prevented lost-time injury typically saves between $38,000 and $150,000 in direct and indirect costs, before accounting for regulatory fines or reputational damage.

🛒 Retail Shelf Intelligence (B2B)

Retail has always been a natural home for computer vision. Out-of-stock positions cost the global retail industry an estimated $1 trillion annually. Planogram compliance — ensuring products are placed correctly according to store layout rules — is checked manually, infrequently, and inaccurately.

The traditional approach to fixing this involves fixed cameras, complex infrastructure, and expensive professional services to configure and maintain. It's why CV-based shelf intelligence has been a promise for years but rarely a deployed reality for mid-market retailers.

Smartglasses change the deployment model entirely. A store associate wearing CV-enabled glasses can generate a complete audit of shelf conditions just by walking the floor during their normal shift. The model running on the glasses detects:

- Out-of-stock positions and low inventory levels

- Products placed in incorrect locations

- Facing and alignment issues

- Promotional material compliance

No additional hardware. No infrastructure investment. The glasses become a scanning device that produces actionable shelf intelligence passively, as part of an existing workflow.

For this to work at the shelf-edge in real environments, the model needs to handle varied lighting, partial occlusion, and densely packed SKU environments with high accuracy. A model trained specifically on a retailer's product catalog — rather than a generic object detection model — will dramatically outperform general-purpose alternatives.
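Once the model has identified which product sits in which shelf position, the audit reduces to a comparison against the planogram. The sketch below assumes both are available as simple slot-to-SKU mappings; the slot IDs, SKU names, and the `planogram_issues` function are hypothetical and stand in for whatever format a retailer actually uses.

```python
def planogram_issues(expected: dict, detected: dict) -> dict:
    """Compare the expected SKU per shelf slot with what the model detected.

    `detected` maps slot -> SKU; None or a missing key means an empty slot.
    """
    issues = {"out_of_stock": [], "misplaced": []}
    for slot, sku in expected.items():
        seen = detected.get(slot)
        if seen is None:
            issues["out_of_stock"].append(slot)
        elif seen != sku:
            issues["misplaced"].append((slot, sku, seen))
    return issues


# Hypothetical planogram and detections for one shelf
expected = {"A1": "cola-330", "A2": "cola-zero-330", "A3": "water-500"}
detected = {"A1": "cola-330", "A2": "water-500"}

print(planogram_issues(expected, detected))
```

Here slot A3 is reported as out of stock and slot A2 as holding the wrong product, which is the kind of output an associate could act on during the same walk.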

🔧 Assisted Maintenance and Repair (B2B)

Industrial maintenance is a knowledge problem as much as a technical one. Senior technicians carry decades of institutional knowledge about specific equipment, failure patterns, and repair procedures. As that workforce ages and retires, that knowledge walks out the door.

Smartglasses running CV applications can begin to encode and surface that knowledge at the point of need. A technician looking at an unfamiliar machine can have:

- The component identified and labeled in their field of view

- Visual wear patterns flagged before they become failures

- The correct maintenance procedure surfaced for the exact component and condition in front of them

- Step-by-step guidance overlaid on the physical object they're working on

The accuracy requirement here is acute. If the model misidentifies a component and surfaces the wrong procedure, the technician may make the problem worse. In high-stakes environments — aerospace, medical equipment, power generation — the consequences extend well beyond downtime.

This use case also illustrates why small, specialized models beat large generic ones in practice. A model trained specifically on the machinery used by a particular manufacturer or maintenance operation will achieve substantially higher accuracy on that equipment than a general-purpose vision model that has seen every object type but is a specialist in none of them.

On-device deployment also matters here because many industrial environments — factory floors, utility infrastructure, offshore facilities — have limited or zero connectivity.

🎣 Fishing: Species Identification on the Water (B2C)

With approximately 700 million recreational fishers globally, fishing is one of the largest potential consumer applications for smartglass CV, and it illustrates the unique requirements of edge deployment better than almost any other use case.

A fisher pulls up a catch on a remote lake. The relevant questions are immediate and consequential: Is this species protected in this region? Is this fish above the minimum size limit? Am I legally required to release it?

The answer to those questions varies by geography, species, and local regulations. Getting it wrong — keeping a protected species or an undersized fish — carries fines that can be significant. Getting it right requires accurate species identification in conditions that are rarely ideal: poor lighting, the fish partially in water, scales catching glare.

And critically, none of this happens in a location with reliable internet connectivity. Remote lakes, coastal fishing spots, and backcountry streams are exactly the places where cellular coverage disappears.

This is the perfect edge CV use case:

- No connectivity available — cloud inference is not an option

- Accuracy is legally and ethically consequential — a wrong identification has real stakes

- Latency matters — the fish is alive and needs to be returned to water quickly

- The object class is well-defined — a model trained on regional fish species doesn't need to know what a fork or a car looks like

A small vision model trained on the relevant species for a given geography, running fully on-device with high accuracy and near-zero latency, solves a problem that 700 million people face regularly.
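The decision logic that sits behind the model can also run entirely offline, as a lookup against a regulation table stored on the device. The sketch below is a minimal illustration: the region, species names, and size limit are made up, the `Rule` type and `must_release` function are hypothetical, and real limits would have to come from the relevant fisheries authority. It defaults to release whenever the identification is uncertain or the species is unknown.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    protected: bool
    min_length_cm: Optional[float] = None


# Made-up example table keyed by (region, species); not real regulations.
RULES = {
    ("example-region", "brown_trout"): Rule(protected=False, min_length_cm=30.0),
    ("example-region", "sturgeon"): Rule(protected=True),
}


def must_release(region: str, species: str, length_cm: float,
                 confidence: float, min_confidence: float = 0.9) -> bool:
    """Return True when the catch should be released under the local table."""
    rule = RULES.get((region, species))
    if rule is None or confidence < min_confidence:
        return True  # unknown species or uncertain ID: default to release
    if rule.protected:
        return True
    return rule.min_length_cm is not None and length_cm < rule.min_length_cm


print(must_release("example-region", "brown_trout", 35.0, confidence=0.97))
print(must_release("example-region", "brown_trout", 25.0, confidence=0.97))
print(must_release("example-region", "sturgeon", 90.0, confidence=0.99))
```

Keeping the rules in a small local table means only the table needs updating when regulations change, while the vision model stays the same.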

Why Generic Models Won't Work Here

All four applications above share a common thread: they require high accuracy on a specific, well-defined visual task, in constrained hardware environments, often without connectivity.

This is precisely where large, generic vision models fail — and where small, specialized models win.

A generic model trained on thousands of object categories carries the computational cost of all that knowledge, even when 99% of it is irrelevant to the task at hand. Running a model that knows what a school bus looks like on hardware that needs to identify shelf gaps is wasteful and slow. Accuracy on the specific task also suffers, because the model has spread its capacity across an enormous range of unrelated problems.

A model trained specifically on shelf-edge products, safety equipment categories, machine components, or regional fish species allocates all of its capacity to the problem it actually needs to solve. The result:

- Faster to train: you're not training on millions of irrelevant examples

- More focused at inference: every parameter is working on your specific problem

- Cheaper to operate: smaller models mean lower inference costs and lower power consumption on edge hardware

This is the architectural bet that makes smartglass CV applications viable at scale. Not bigger models pushed harder, but the right model for the right task, built to run where and when it's needed.

The Infrastructure Problem

Knowing that specialized small models are the right approach is only useful if building them is accessible to the developers who need them.

Today, it largely isn't. Creating a task-specific vision model still requires expertise that the vast majority of developers don't have: understanding of annotation quality requirements, model architecture selection, training pipeline configuration, accuracy evaluation, and edge deployment optimization. The tooling that exists was built for ML practitioners, not for the full-stack developers who will build the next generation of smartglass applications.

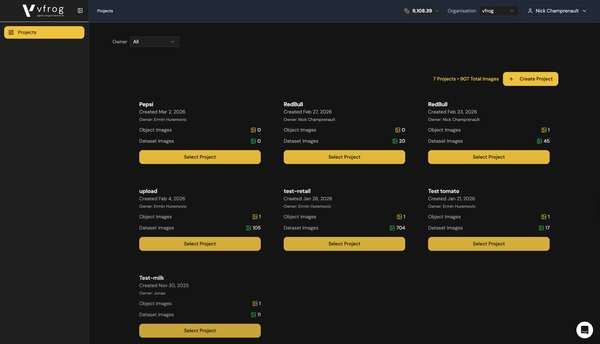

This is the gap vfrog was built to close.

Three pieces of infrastructure sit at the core of it:

An agentic framework for vision model development. Developers describe what they need to detect in plain language. The agent handles annotation, model selection, training, and deployment, encoding the expertise that would otherwise require a specialist. Part of this is already publicly available: CROAK, an open-source package published on both npm (JavaScript) and PyPI (Python), built specifically for the CV/ML pipeline in Claude Code environments.

Small, task-specific vision models by design. Rather than giving developers access to a large generic model and asking them to fine-tune it, vfrog's architecture produces purpose-built models for specific detection tasks. Faster to train, more accurate on the target problem, and small enough to deploy on constrained hardware.

Edge deployment with high accuracy. Every model built on vfrog is optimized for on-device inference. Minimal quantization degradation. Low latency. No dependency on connectivity. The architecture is designed from the ground up for the environments where smartglass applications actually run.
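For readers unfamiliar with quantization, the idea is to store weights as small integers instead of floats and accept a small rounding error in exchange for a much smaller, faster model. The sketch below shows generic symmetric 8-bit quantization on a handful of made-up weights. It is a textbook illustration of the technique, not a description of how vfrog implements it, and the function names are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the stored integers."""
    return [v * scale for v in q]


weights = [0.82, -0.41, 0.05, -1.27, 0.33]  # made-up example values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("int8 values:", q)
print("max round-trip error:", max_error)
```

The round-trip error is bounded by half the scale step. The degradation that matters in practice is the effect on task accuracy, which has to be measured on a validation set after quantizing.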

The Window Is Open

Platform shifts don't wait. The developers who built the defining applications of the smartphone era moved in the first two years — before the platforms were fully mature, before the audiences were fully formed, because that's when the creative space was widest.

The smartglass platform wars are just beginning. The OS layer is being built right now. The hardware is ready. The use cases are obvious.

The application layer is wide open.

The question is whether the developers who want to build on this platform will have the tools to do it — or whether the CV expertise barrier will keep the most compelling applications unbuilt for another decade.

That's the problem vfrog exists to solve.

vfrog is an agentic computer vision platform that lets any developer build and deploy task-specific small vision models, with no ML expertise required. CROAK, our open-source CV agent package, is available now as a JavaScript package on npm and a Python package on PyPI. Self-serve platform access starts at $49/month.

→ vfrog.ai