What is Auto-Annotation? How AI-Assisted Labeling Works

Auto-annotation is the process of using AI models to automatically generate labels (bounding boxes, polygons, segmentation masks) on images, reducing and in some cases replacing manual human annotation. It is one of the most impactful advances in making computer vision projects accessible to non-specialists.

Why Annotation is the Bottleneck

Before a computer vision model can learn to detect anything — defects on a production line, products on a shelf, hard hats on a construction site — it needs labeled training data. Every object in every training image must be marked with a bounding box, polygon, or mask that tells the model "this is what you're looking for."

This labeling process is often the single largest time and cost sink in a CV project. Commonly cited industry estimates put annotation at 60–80% of total project time. A dataset of 1,000 images with 5 objects per image means 5,000 individual annotations. At 30 seconds per annotation (an optimistic estimate for complex objects), that's about 42 hours of focused human labor before a single model is trained.
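The arithmetic behind the 42-hour figure is easy to reproduce for your own dataset. The sketch below is only a back-of-the-envelope helper; the function name `annotation_hours` is made up for illustration.

```python
def annotation_hours(images: int, objects_per_image: int, seconds_per_label: float) -> float:
    """Total labeling time in hours for a fully manual pass."""
    total_labels = images * objects_per_image
    return total_labels * seconds_per_label / 3600


# Figures from the example above: 1,000 images, 5 objects each, 30 s per label.
hours = annotation_hours(images=1_000, objects_per_image=5, seconds_per_label=30)
print(f"{hours:.1f} hours")  # 41.7 hours, which the text rounds to 42
```

Swap in your own image count and per-label time to see how quickly the total grows.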

For organizations without dedicated annotation teams, this bottleneck is often the reason CV projects never get past the proof-of-concept stage. The technology works. The use case is clear. But nobody has 42 hours to draw bounding boxes.

Auto-annotation changes this equation fundamentally.

How Auto-Annotation Works

Auto-annotation systems use pre-trained vision models to generate initial labels on unlabeled images. The specific approach varies by platform, but the general workflow follows a consistent pattern:

Step 1: Upload Unlabeled Images

The user uploads raw images to the platform. No prior labeling is required. The images should contain examples of the objects the user wants to detect.

Step 2: AI Model Generates Predictions

A pre-trained model (or an ensemble of models) analyzes each image and generates predicted labels. These predictions take the form of bounding boxes, polygons, or segmentation masks, depending on the task type.

The model used for auto-annotation is typically a general-purpose detector (trained on broad datasets like COCO or Open Images) or a foundation model (like Meta's SAM for segmentation). Some platforms use proprietary models fine-tuned for specific domains.

Step 3: Human Review and Correction

The AI-generated labels are presented to the user for review. This is the critical step — the human task shifts from creation (draw a box around each object) to verification (is this box correct? yes or no).

Verification is dramatically faster than creation. Studies on human annotation workflows commonly report that verifying a pre-generated label takes 2–5 seconds, while creating a label from scratch takes 15–45 seconds depending on object complexity. For simple objects that is 15 seconds against 2, and for complex ones 45 against 5, so roughly a 5–10x speedup in the annotation phase alone.

Step 4: Corrected Labels Become Training Data

After human review, the corrected annotations become the training dataset. Any labels the AI got wrong are fixed by the human reviewer. Any objects the AI missed are added manually. The result is a fully labeled dataset produced in a fraction of the time manual annotation would require.
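The four steps above can be sketched as a small data flow. This is a minimal illustration, not any platform's actual API: the `Box` class, the `apply_review` function, and the "accept" / "reject" decision strings are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Union


@dataclass(frozen=True)
class Box:
    """One labeled object: class name plus a pixel-space bounding box."""
    label: str
    x: float
    y: float
    width: float
    height: float


# A review decision is "accept", "reject", or a corrected Box (hypothetical scheme).
Decision = Union[str, Box]


def apply_review(predicted: List[Box], decisions: List[Decision], missed: List[Box]) -> List[Box]:
    """Turn model predictions plus human decisions into final training labels."""
    if len(predicted) != len(decisions):
        raise ValueError("every predicted box needs exactly one decision")
    final: List[Box] = []
    for box, decision in zip(predicted, decisions):
        if isinstance(decision, Box):
            final.append(decision)      # reviewer adjusted the box
        elif decision == "accept":
            final.append(box)           # prediction kept as-is
        elif decision != "reject":
            raise ValueError(f"unknown decision: {decision!r}")
        # "reject" drops the prediction entirely
    final.extend(missed)                # objects the model did not find
    return final


predicted = [
    Box("hard_hat", 10, 10, 40, 30),
    Box("hard_hat", 200, 50, 35, 28),
    Box("hard_hat", 90, 90, 20, 20),
]
decisions = ["accept", Box("hard_hat", 198, 48, 40, 32), "reject"]
missed = [Box("hard_hat", 300, 120, 38, 30)]

training_labels = apply_review(predicted, decisions, missed)
print(len(training_labels))  # 3
```

Only the corrected and manually added boxes require drawing; everything else is a single accept or reject decision.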

Types of Auto-Annotation

Zero-Shot Auto-Annotation

Zero-shot approaches use models that can detect objects they've never been explicitly trained on. The user provides a text description ("detect hard hats") or an example image, and the model attempts to find matching objects across the dataset.

Foundation models like CLIP (Contrastive Language-Image Pre-training), which scores how well an image or image region matches a text description, and Grounding DINO, which localizes objects from a text prompt, enable this approach. The advantage is that no initial labeled data is required. The disadvantage is that accuracy on specialized domains (medical imaging, industrial inspection) can be significantly lower than on common objects.
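To make the idea concrete, here is a toy sketch of text-to-region matching in the spirit of CLIP-style models. It does not call any real model: the three-dimensional embeddings, the region names, and the 0.9 threshold are all invented for illustration, whereas a real system would get high-dimensional embeddings from a pre-trained encoder.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


# Made-up embeddings; stand-ins for what a text and image encoder would output.
text_embedding = [0.9, 0.1, 0.2]  # represents the prompt "hard hat"
region_embeddings = {
    "region_a": [0.8, 0.2, 0.1],
    "region_b": [0.1, 0.9, 0.3],
    "region_c": [0.7, 0.0, 0.4],
}

THRESHOLD = 0.9  # hypothetical cut-off; tuned per dataset in practice

# Keep only regions whose embedding points in nearly the same direction as the prompt.
matches = {
    name: cosine(text_embedding, emb)
    for name, emb in region_embeddings.items()
    if cosine(text_embedding, emb) >= THRESHOLD
}
print(sorted(matches))  # ['region_a', 'region_c']
```

Regions that clear the threshold become candidate labels for human review; the rest are ignored.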

Few-Shot Auto-Annotation

Few-shot approaches use a small number of human-labeled examples (typically 5–20 images) to guide the AI's predictions on the remaining unlabeled images. The model learns from the examples and applies that understanding to the full dataset.

This is the approach used by vfrog's SSAT (Self-Supervised Annotation Technology). Users upload images, provide a few example annotations or a natural language description, and the agent auto-labels approximately 80% of objects across the dataset. The human reviews the auto-generated labels and manually annotates the remaining 20%.
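One generic way to turn a handful of labeled examples into predictions is nearest-prototype classification: average the feature vectors of each class, then assign every unlabeled object to the closest average. The sketch below illustrates that general technique only; it is not a description of how SSAT works internally, and the two-dimensional feature vectors are made up.

```python
import math


def centroid(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    count = len(vectors)
    return [sum(v[i] for v in vectors) / count for i in range(len(vectors[0]))]


def nearest_prototype(feature, prototypes):
    """Return the class whose prototype is closest in Euclidean distance."""
    return min(prototypes, key=lambda label: math.dist(feature, prototypes[label]))


# A few human-labeled examples per class (hypothetical feature vectors).
labeled_examples = {
    "hard_hat": [[0.9, 0.1], [0.8, 0.2], [1.0, 0.0]],
    "safety_vest": [[0.1, 0.9], [0.2, 0.8]],
}
prototypes = {label: centroid(vectors) for label, vectors in labeled_examples.items()}

# Features of objects in the unlabeled images.
unlabeled = [[0.85, 0.15], [0.15, 0.95]]
predicted = [nearest_prototype(feature, prototypes) for feature in unlabeled]
print(predicted)  # ['hard_hat', 'safety_vest']
```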

Active Learning

Active learning is an iterative approach where the model identifies which images it's most uncertain about and prioritizes those for human annotation. The human labels the uncertain cases, the model retrains, and the cycle repeats.

This approach is label-efficient (you label the images that improve the model most) but requires multiple rounds of human interaction. It's best suited for large datasets where labeling everything is impractical.
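A common way to pick the "most uncertain" images is uncertainty sampling: rank images by the entropy of the model's predicted class probabilities and send the highest-entropy ones to the human first. The sketch below assumes one probability vector per image, with made-up file names and values; real detectors produce per-object scores that would need to be aggregated first.

```python
import math


def entropy(probs):
    """Shannon entropy of a probability distribution (higher = less certain)."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_labeling(predictions, budget):
    """Return the `budget` image names the model is least sure about."""
    ranked = sorted(predictions, key=lambda name: entropy(predictions[name]), reverse=True)
    return ranked[:budget]


# Hypothetical class probabilities from the current model.
predictions = {
    "img_001.jpg": [0.98, 0.01, 0.01],
    "img_002.jpg": [0.40, 0.35, 0.25],
    "img_003.jpg": [0.70, 0.20, 0.10],
    "img_004.jpg": [0.34, 0.33, 0.33],
}

queue = select_for_labeling(predictions, budget=2)
print(queue)  # ['img_004.jpg', 'img_002.jpg']
```

After the human labels the selected images, the model is retrained and the ranking is recomputed for the next round.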

Model-Assisted Labeling

Model-assisted labeling uses a previously trained model (from a related project or a pre-trained checkpoint) to generate initial annotations. This is common in iterative workflows where a v1 model's predictions bootstrap the annotation for a v2 training dataset.
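In practice, bootstrapping usually means keeping the earlier model's confident predictions as pre-annotations and discarding the rest. The sketch below assumes a simple prediction format (dictionaries with `image_id`, `category`, `bbox` as `[x, y, width, height]`, and `score`) and a hypothetical 0.5 confidence cut-off; neither comes from a specific tool.

```python
def to_pre_annotations(predictions, min_score=0.5):
    """Keep confident v1 predictions as draft labels awaiting human review."""
    drafts = []
    for prediction in predictions:
        if prediction["score"] < min_score:
            continue  # too uncertain to be a useful starting point
        drafts.append({
            "image_id": prediction["image_id"],
            "category": prediction["category"],
            "bbox": prediction["bbox"],
            "needs_review": True,  # drafts are never trusted without review
        })
    return drafts


# Hypothetical output of a v1 model on new, unlabeled images.
v1_predictions = [
    {"image_id": "img_001.jpg", "category": "hard_hat", "bbox": [12, 30, 48, 40], "score": 0.91},
    {"image_id": "img_001.jpg", "category": "hard_hat", "bbox": [200, 44, 50, 38], "score": 0.32},
    {"image_id": "img_002.jpg", "category": "hard_hat", "bbox": [75, 18, 44, 36], "score": 0.67},
]

drafts = to_pre_annotations(v1_predictions, min_score=0.5)
print(len(drafts))  # 2
```

A lower threshold yields more drafts to reject; a higher one leaves more objects to draw by hand.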

Accuracy Considerations

Auto-annotation is not a replacement for human judgment — it's an accelerant. The accuracy of auto-generated labels depends on several factors:

Domain specificity. Auto-annotation is most accurate for common objects (cars, people, animals, household items) where pre-trained models have extensive training data. Accuracy drops for specialized objects (specific PCB components, custom product SKUs, rare plant diseases) that don't appear in general training datasets.

Object complexity. Simple, well-defined objects (boxes, bottles, vehicles) are annotated more accurately than amorphous or overlapping objects (spilled liquids, tangled wires, dense foliage).

Image quality. Clear, well-lit images with distinct objects produce better auto-annotations than dark, blurry, or occluded images.

Review is non-negotiable. Even the best auto-annotation systems make mistakes. Using auto-generated labels without human review introduces label noise that degrades model performance. The speed gain comes from verification being faster than creation, not from eliminating the human entirely.
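One standard way to quantify how far an auto-generated box is from the reviewer's corrected version is intersection over union (IoU): the overlap area divided by the combined area. The article does not prescribe this metric; the sketch below is offered as a common check, with boxes given as `(x_min, y_min, x_max, y_max)` and example coordinates invented for illustration.

```python
def iou(box_a, box_b):
    """Intersection over union for boxes given as (x_min, y_min, x_max, y_max)."""
    overlap_w = max(0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    overlap_h = max(0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    intersection = overlap_w * overlap_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


auto_box = (10, 10, 50, 50)      # what the model proposed
reviewed_box = (20, 20, 60, 60)  # what the human corrected it to

print(f"{iou(auto_box, reviewed_box):.2f}")  # 0.39
```

Tracking the average IoU between proposals and corrections gives a rough signal of how much cleanup a given dataset needs.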

The Economics of Auto-Annotation

The financial impact of auto-annotation is straightforward to calculate:

Manual annotation cost: Professional annotation services charge $0.05–0.50 per annotation depending on complexity. A dataset of 1,000 images with 5 objects each costs $250–2,500 in labeling fees alone, plus project management overhead and turnaround time of 1–4 weeks.

In-house manual annotation: If your team labels internally, the cost is their time. At $50/hour for a developer's fully loaded cost, 42 hours of annotation costs $2,100 in opportunity cost.

Auto-annotation: If AI handles 80% of annotations, the human verifies those 4,000 auto-generated labels and manually annotates the 1,000 missed objects. At 2–5 seconds per verification and 30 seconds per manual annotation, human time drops to approximately 11–14 hours. Cost: roughly $530–700 in opportunity cost, plus platform fees.

The ROI is typically 3–5x on direct annotation costs and 5–10x on time-to-first-model.
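These estimates can be recomputed for any dataset. The sketch below uses only figures quoted in this article (5,000 labels, $0.05–0.50 per outsourced label, $50/hour, 30 seconds per manual label, 2–5 seconds per verification, 80% auto-labeled). It counts verification time for every auto-generated label, so its assisted total includes more than the manually annotated remainder. The variable and function names are illustrative.

```python
def hours(labels: int, seconds_each: float) -> float:
    """Convert a label count and per-label time into hours."""
    return labels * seconds_each / 3600


TOTAL_LABELS = 1_000 * 5   # images x objects per image
HOURLY_RATE = 50           # fully loaded cost in dollars
MANUAL_SECONDS = 30        # time to draw one label from scratch
AUTO_SHARE = 0.8           # fraction of objects labeled automatically

# Outsourced manual annotation at $0.05 to $0.50 per label.
outsourced_low = TOTAL_LABELS * 0.05
outsourced_high = TOTAL_LABELS * 0.50

# In-house manual annotation.
manual_hours = hours(TOTAL_LABELS, MANUAL_SECONDS)
manual_cost = manual_hours * HOURLY_RATE

# Assisted workflow: verify the auto labels, draw the missed ones by hand.
auto_labels = round(TOTAL_LABELS * AUTO_SHARE)
missed_labels = TOTAL_LABELS - auto_labels


def assisted_hours(verify_seconds: float) -> float:
    return hours(auto_labels, verify_seconds) + hours(missed_labels, MANUAL_SECONDS)


print(f"outsourced: ${outsourced_low:,.0f} to ${outsourced_high:,.0f}")
print(f"in-house manual: {manual_hours:.1f} h, ${manual_cost:,.0f}")
for verify_seconds in (2, 5):
    total = assisted_hours(verify_seconds)
    print(f"assisted at {verify_seconds} s/verify: {total:.1f} h, ${total * HOURLY_RATE:,.0f}")
```

Because the inputs are plain constants, it is straightforward to test how sensitive the savings are to the auto-labeled share or to verification speed.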

Auto-Annotation at vfrog

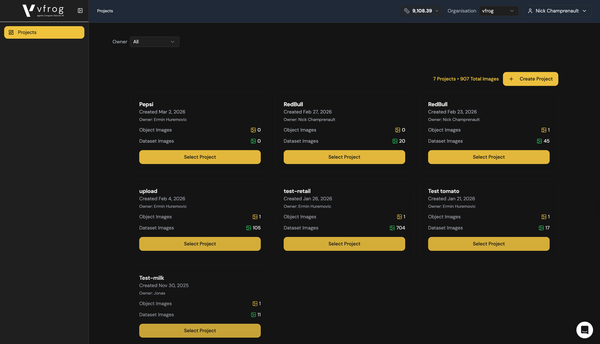

vfrog's implementation of auto-annotation through the SSAT agent follows the few-shot pattern:

- Upload images. Drag and drop your dataset into the platform.

- Describe what to detect. Tell the agent what objects you're looking for in plain English.

- Agent auto-annotates. SSAT processes the images and generates bounding boxes for detected objects. Approximately 80% of objects are labeled automatically.

- Human reviews via HALO. The Human-Assisted Labeling of Objects interface presents auto-generated labels as thumbnails. Click the checkmark to accept, the X to reject, or drag to adjust. A dataset of 100 images typically takes 10–15 minutes to review.

- Train. Once annotations are verified, one-click training produces a task-specific model.

The entire workflow — from uploading images to having a deployed model — can complete in under 2 hours for a typical dataset. Without auto-annotation, the same project would take days to weeks depending on dataset size.

The Future of Auto-Annotation

Auto-annotation technology is improving rapidly. Foundation models are becoming more capable at zero-shot detection. Few-shot learning is requiring fewer examples. Interactive annotation (where the user clicks a point and the model generates a full segmentation mask) is becoming standard.

The trajectory is clear: the human role in annotation is shifting from laborer to supervisor. The question isn't whether auto-annotation will replace manual labeling — it already has for many use cases. The question is how quickly the remaining accuracy gap closes for specialized domains.

For developers and teams evaluating CV platforms today, auto-annotation capability should be a primary selection criterion. If annotation accounts for 60–80% of project time, a platform that cuts annotation time by 80% shortens the path to a first model by roughly half to two-thirds. In competitive markets, that speed advantage compounds.

vfrog.ai's SSAT agent auto-annotates approximately 80% of objects in your images. Try it with a 14-day free trial at vfrog.ai.