Computer Vision in Agriculture: From Field to Fork, the Use Cases Are Everywhere. The Implementation Still Isn't.

Computer vision in agriculture is proven — from drone crop scouting to livestock monitoring to post-harvest grading. But most deployments cost six figures and take seasons to validate. This post maps the real use cases, the real costs, and what a different approach looks like.

Agriculture feeds the world. It also runs on razor-thin margins, operates in harsh and unpredictable environments, and faces a labor shortage that is getting worse every year. It is, by almost any measure, one of the industries with the most to gain from computer vision.

The use cases are not speculative. IBM and Paulman Farms are using drone-captured imagery and CV models to detect disease and nutrient stress across 10,000 acres of corn in Nebraska. DeLaval's BCS system scores dairy cows for body condition every single day using overhead cameras in milking parlors — automatically, without a vet present. Optical grading lines in fruit and vegetable processing run tens of items per second through vision systems that classify size, color, surface defects, and contamination faster and more consistently than any human crew.

But here's what the case studies don't say out loud: most of these deployments took months, cost six figures, and were built by organizations with the capital and technical resources to absorb a complex, uncertain implementation. A full smart-farm or vertical farming deployment can run into seven figures before ROI materializes.

For the majority of agricultural operations — family farms, mid-size growers, livestock producers, cooperatives without dedicated technology teams — the gap between "we know CV would help" and "we have CV running in production" remains enormous.

This post maps what computer vision in agriculture actually looks like, what it costs, why implementations struggle, and what a different approach makes possible.

What Agriculture Is Actually Using CV For

Computer vision has found its way into every stage of the agricultural value chain — from the field to the processing line, from the barn to the greenhouse.

🐄 Livestock Health, Behavior, and Counting

Animal welfare monitoring is one of the most compelling and commercially validated applications of CV in agriculture. The value is direct: earlier disease detection reduces mortality, cuts veterinary intervention costs, and improves productivity. The challenge is that monitoring hundreds or thousands of animals continuously is simply beyond what any human team can do.

DeLaval's BCS system positions overhead cameras in milking parlors and automatically scores dairy cows for body condition every day, flagging welfare concerns before they become clinical problems. CattleEye works similarly, using a camera mounted at the parlor exit to monitor each cow as it passes; the company claims around $420 per cow per year in realized value from improved health and productivity.

OneCup AI's BETSY system takes a broader approach: fixed cameras near water and feeding points identify individual animals, monitor activity patterns, detect calving events, and send real-time alerts — all on a subscription model of approximately $40 per camera per month.

For swine operations, top-down camera systems using CNNs trained on image-weight pairs provide continuous weight estimation and welfare monitoring without the stress of manual weighing — a welfare improvement that also delivers better data for feed optimization.
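
A CNN is too large for a short example, but the core idea (learn a mapping from image-derived measurements to scale weight using paired examples) can be sketched with a one-feature least-squares fit. This is a deliberately simpler stand-in, not how any named system works: the single feature is a hypothetical top-down projected body area in pixels, and the training pairs below are invented for illustration.

```python
from statistics import mean

def fit_weight_model(areas_px, weights_kg):
    """Least-squares fit of weight_kg = slope * area_px + intercept from paired examples."""
    mean_a, mean_w = mean(areas_px), mean(weights_kg)
    cov = sum((a - mean_a) * (w - mean_w) for a, w in zip(areas_px, weights_kg))
    var = sum((a - mean_a) ** 2 for a in areas_px)
    if var == 0:
        raise ValueError("need at least two distinct area measurements")
    slope = cov / var
    return slope, mean_w - slope * mean_a

def estimate_weight(area_px, model):
    """Predict weight (kg) for a new top-down area measurement."""
    slope, intercept = model
    return slope * area_px + intercept

# Hypothetical image-weight pairs: segmented top-down area (pixels) vs. scale weight (kg)
areas = [10_000, 12_000, 14_000, 16_000]
weights = [50.0, 60.0, 70.0, 80.0]
model = fit_weight_model(areas, weights)
print(round(estimate_weight(15_000, model), 1))  # 75.0
```

A CNN replaces the hand-picked area feature with features learned directly from pixels, which matters when posture, camera height, and breed change the relationship between area and weight.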

🚁 Drone-Based Crop Monitoring and Disease Detection

From the air, computer vision gives agronomists a scale of visibility that ground-based scouting simply cannot match. Drones equipped with RGB and multispectral cameras fly fields at defined intervals throughout the growing season, capturing imagery that CV models analyze for disease presence, pest pressure, nutrient deficiency, and crop stress.
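
The post does not say which features any vendor's models use, but a common starting point for multispectral stress mapping is the Normalized Difference Vegetation Index, NDVI = (NIR − Red) / (NIR + Red). Healthy vegetation reflects strongly in near-infrared and absorbs red light, so values near 1 suggest dense, vigorous canopy and values near 0 suggest bare soil or stressed plants. A minimal per-pixel sketch, assuming two co-registered reflectance bands stored as lists of rows (the sample values are made up):

```python
def ndvi(nir: float, red: float) -> float:
    """Normalized Difference Vegetation Index for one pixel: (NIR - Red) / (NIR + Red)."""
    total = nir + red
    if total == 0:
        return 0.0  # no signal in either band; treat as non-vegetated
    return (nir - red) / total

def ndvi_map(nir_band, red_band):
    """Apply NDVI pixel by pixel to two co-registered bands (lists of rows)."""
    return [[ndvi(n, r) for n, r in zip(nir_row, red_row)]
            for nir_row, red_row in zip(nir_band, red_band)]

# Hypothetical reflectance values for a 2x2 patch of a field
nir = [[0.50, 0.45], [0.30, 0.20]]
red = [[0.10, 0.08], [0.20, 0.18]]
for row in ndvi_map(nir, red):
    print([round(v, 2) for v in row])
```

An index like this is a hand-crafted feature; trained CV models can learn disease- or pest-specific patterns that a single ratio cannot separate.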

IBM's collaboration with Paulman Farms in Nebraska demonstrates the operational form of this: drones capture both aerial overview and close-up images of corn crops, IBM's vision models detect disease and pest patterns, and a mobile app surfaces actionable maps directly to the farm team. The result is coverage of ten thousand acres in a fraction of the time a scouting crew would require.

The value, however, only materializes when the maps connect to decisions — variable-rate spraying, targeted irrigation, re-planting prescriptions. A drone analytics program that produces beautiful stress maps but doesn't feed into operational workflows is an expensive data collection exercise.
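
Connecting a map to a decision can be as simple as a lookup from per-zone stress scores to application rates that a variable-rate controller can consume. The sketch below assumes the CV model emits one stress score in [0, 1] per management zone; the thresholds, rates, and the `Zone` type are hypothetical, and in practice an agronomist would set them.

```python
from dataclasses import dataclass

@dataclass
class Zone:
    zone_id: str
    stress: float  # model output: 0.0 = healthy, 1.0 = severely stressed

# Hypothetical agronomist-defined table: (minimum stress score, rate in L/ha),
# checked from the highest threshold down.
RATE_TABLE = [(0.7, 200.0), (0.4, 120.0), (0.0, 0.0)]

def prescribe(zones, table=RATE_TABLE):
    """Turn a stress map into a per-zone application rate for a variable-rate controller."""
    plan = {}
    for zone in zones:
        score = min(max(zone.stress, 0.0), 1.0)  # clamp model output into [0, 1]
        for threshold, rate in table:
            if score >= threshold:
                plan[zone.zone_id] = rate
                break
    return plan

print(prescribe([Zone("A1", 0.82), Zone("A2", 0.45), Zone("A3", 0.10)]))
# {'A1': 200.0, 'A2': 120.0, 'A3': 0.0}
```

Keeping the rate table as data rather than code lets the farm team adjust it without touching the model.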

💧 Precision Spraying, Weeding, and Variable-Rate Inputs

One of the highest-ROI applications in agriculture CV is also one of the most technically demanding: real-time weed detection and precision spraying at field speed.

Camera systems mounted on sprayer booms analyze the ground ahead in real time, distinguishing weeds from crop in both green-on-brown settings (weeds on bare soil) and green-on-green settings (weeds growing within the crop), and triggering individual nozzles to spray only where needed. The result: herbicide reduction of 40–60% compared to blanket application, with direct cost savings and regulatory compliance benefits in jurisdictions tightening restrictions on chemical use.
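
Once weeds are detected, the control step is mostly geometry: map each weed's lateral position across the boom to the nozzle sections it overlaps. A minimal sketch, assuming evenly spaced nozzles and detections already converted from pixels to metres measured from the left end of the boom; the function name and the 12 m, 24-nozzle boom are hypothetical.

```python
def nozzles_to_fire(weeds, boom_width_m, n_nozzles):
    """Return sorted indices of nozzles whose spray section overlaps any detected weed.

    weeds: list of (left_m, right_m) lateral extents, measured from the boom's left end.
    """
    section = boom_width_m / n_nozzles  # width of ground covered by one nozzle
    fire = set()
    for left, right in weeds:
        first = max(0, int(left // section))
        last = min(n_nozzles - 1, int(right // section))
        fire.update(range(first, last + 1))
    return sorted(fire)

# Hypothetical 12 m boom with 24 nozzles (0.5 m per nozzle)
print(nozzles_to_fire([(0.1, 0.3), (5.9, 6.2), (11.8, 12.0)], 12.0, 24))
# [0, 11, 12, 23]
```

A weed straddling a section boundary opens both neighbouring nozzles, trading a little extra herbicide for a lower chance of a missed weed.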

The technical requirements are unforgiving. Equipment moves at 10–20 km/h. Inference must happen at the edge: connectivity in the middle of a field is often absent, and cloud round-trip latency would make the system useless at speed. The models also need to generalize across weed species mixes that vary by field, season, and geography.
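
The latency constraint is simple arithmetic: at speed v (km/h) the boom moves v / 3.6 metres per second, so the whole capture, inference, and valve-actuation chain must finish before the nozzle reaches a weed the camera saw some distance ahead. The 1.0 m camera-to-nozzle look-ahead below is a hypothetical mounting geometry.

```python
def metres_travelled(speed_kmh: float, seconds: float) -> float:
    """Distance the boom moves during a given delay."""
    return speed_kmh / 3.6 * seconds

def latency_budget_s(lookahead_m: float, speed_kmh: float) -> float:
    """Longest end-to-end delay (capture + inference + valve actuation) that still lets
    a nozzle fire before it passes a weed seen `lookahead_m` ahead of it."""
    return lookahead_m / (speed_kmh / 3.6)

for speed in (10, 20):
    print(f"{speed} km/h: {metres_travelled(speed, 0.1):.2f} m per 100 ms, "
          f"budget {latency_budget_s(1.0, speed) * 1000:.0f} ms for a 1.0 m look-ahead")
```

At 20 km/h the boom covers about 0.56 m every 100 ms, so a 1.0 m look-ahead leaves roughly 180 ms for everything; a network round trip of even a few hundred milliseconds would exhaust that budget on its own.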

🍎 Yield Estimation, Fruit Counting, and Harvest Readiness

Knowing how much fruit a block will yield — before harvest — transforms operational planning. Labor can be scheduled accurately. Logistics can be arranged. Pricing negotiations can be entered with real data.

Vision systems mounted on tractors or specialized robots count fruit per tree or vine as they move through orchards, building yield estimates from direct observation rather than historical averages or manual sampling. Maturity and color assessment models add a second layer: not just how many, but when — feeding harvest scheduling decisions with per-block readiness data.

The data challenge here is significant: models need to count fruit through foliage, trellising, and the geometric complexity of orchard canopies, across lighting conditions that vary from harsh midday sun to dusk-light passes specifically chosen for imaging stability.
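
One common way to turn camera counts into a block estimate is to correct for the fruit the camera cannot see, calibrated against hand counts on a few sample trees, and then multiply by an average fruit weight. This is a simplified sketch under stated assumptions: a single detection rate for the whole block, and hypothetical counts and fruit weight.

```python
def calibrate_detection_rate(visible_counts, hand_counts):
    """Fraction of fruit the vision system sees, estimated on hand-counted sample trees."""
    return sum(visible_counts) / sum(hand_counts)

def block_yield_kg(visible_counts, detection_rate, mean_fruit_kg):
    """Scale per-tree camera counts up for occlusion, then convert fruit count to mass."""
    if not 0 < detection_rate <= 1:
        raise ValueError("detection_rate must be in (0, 1]")
    estimated_fruit = sum(visible_counts) / detection_rate
    return estimated_fruit * mean_fruit_kg

# Hypothetical: two sample trees hand-counted at 100 fruit each, camera saw 70 and 90
rate = calibrate_detection_rate([70, 90], [100, 100])
print(round(block_yield_kg([120, 100, 140], rate, mean_fruit_kg=0.2), 1))  # 90.0
```

A single block-wide rate hides variation between canopies and lighting passes, which is why sample trees should span the conditions the camera will actually encounter.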

🌱 Sorting, Grading, and Quality Inspection Post-Harvest

At the processing stage, vision systems have replaced human graders on high-throughput lines across fruits, vegetables, and grains. Cameras positioned along conveyor belts classify each item by size, color, surface condition, and defect presence — controlling diverter gates that route items to appropriate grades or reject streams.

Foreign object detection adds a food safety layer: vision systems (often combined with X-ray) detect stones, metal fragments, or biological contamination in grain and nut processing lines before they reach packaging.

The throughput demands here are extreme — tens of items per second, with latency budgets that leave no room for cloud inference. And the defect taxonomy is defined by buyers, not engineers: what constitutes a Grade A apple versus a Grade B depends on the contractual standard, which means domain experts need to be in the loop for label definition from the start.
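
Because grades are contractual, it helps to keep the buyer's standard as data that domain experts can review, separate from the model that measures each item. The sketch below assumes upstream vision models already produce diameter, color coverage, and defect area per item; the `Item` fields and the grade thresholds are hypothetical, not any real buyer's standard.

```python
from dataclasses import dataclass

@dataclass
class Item:
    diameter_mm: float
    color_score: float      # fraction of surface with the target color, 0..1
    defect_area_pct: float  # percent of surface covered by detected defects

# Hypothetical buyer spec, best grade first; real thresholds come from the contract.
BUYER_SPEC = [
    ("A", {"min_diameter_mm": 70, "min_color": 0.75, "max_defect_pct": 1.0}),
    ("B", {"min_diameter_mm": 60, "min_color": 0.50, "max_defect_pct": 5.0}),
]

def grade(item, spec=BUYER_SPEC):
    """Return the first grade whose rules the item satisfies, else route to reject."""
    for name, rule in spec:
        if (item.diameter_mm >= rule["min_diameter_mm"]
                and item.color_score >= rule["min_color"]
                and item.defect_area_pct <= rule["max_defect_pct"]):
            return name
    return "reject"

print(grade(Item(75, 0.80, 0.5)), grade(Item(65, 0.60, 3.0)), grade(Item(55, 0.90, 0.0)))
# A B reject
```

The returned label would drive a diverter gate. At 20 items per second, a serial pipeline has an average of 50 ms per item for measurement and grading combined.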

🏠 Barn, Greenhouse, and Field Infrastructure Monitoring

Smart barn systems monitor occupancy, animal distribution, equipment status, and environmental conditions — adjusting ventilation, feeding, and lighting automatically based on what the cameras see. When an alley is blocked, when pens are overcrowded, when unusual animal movement patterns suggest illness or injury, the system alerts.

In greenhouse and vertical farming environments, CV combined with IoT sensors is producing dramatic efficiency gains. Case studies report 40–50% water savings and 40% labor reduction when camera-based plant monitoring is integrated with automated environmental control — tracking growth rates, detecting disease early, and optimizing light and nutrient schedules across thousands of plants that no human team could monitor individually.

What It Actually Costs — And Why

Agricultural CV deployments span an enormous cost range depending on scale and complexity.

A small pilot — a few cameras on a single farm, a drone analytics program for one season — typically runs in the low-to-mid five figures including hardware and integration. Regional rollouts across multiple barns or fields reach the low-to-mid six figures. Full smart-farm or vertical farming deployments with integrated infrastructure can reach mid-six to seven figures before ROI materializes.

More specifically: livestock monitoring SaaS like CattleEye or BETSY starts with standard IP cameras at a few hundred dollars each and subscriptions around $40 per camera per month — genuinely accessible entry points. Custom livestock monitoring projects from specialist vendors run into six figures at scale. Precision spraying retrofits run mid-five to low-six figures per boom. Optical sorting lines for post-harvest grading range from low to high six figures per installation.
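
For the SaaS entry point, first-year cost is hardware plus subscription. The sketch uses the approximate $40 per camera per month figure cited above and assumes a hypothetical $300 camera to stand in for "a few hundred dollars"; it deliberately leaves out installation, networking, and labor, which can dominate on farms without existing infrastructure.

```python
def first_year_cost(n_cameras: int, camera_price: float = 300.0,
                    monthly_fee: float = 40.0, months: int = 12) -> float:
    """Hardware bought up front plus a per-camera subscription (installation,
    networking, and labor are not included)."""
    hardware = n_cameras * camera_price
    subscription = n_cameras * monthly_fee * months
    return hardware + subscription

for n in (5, 20):
    print(n, "cameras:", f"${first_year_cost(n):,.0f}")
# 5 cameras: $3,900
# 20 cameras: $15,600
```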

The cost structure in agriculture carries a challenge that manufacturing doesn't face to the same degree: seasonality. A model that needs validation in the field can only be tested during the relevant growing season. A failed pilot in October may mean waiting until May for the next iteration. Every experimental cycle is measured in months, not weeks.

Why Implementations Struggle

Agricultural CV deployments fail for reasons that are consistently operational rather than algorithmic.

Data scarcity across seasons and conditions. A model trained on last season's disease imagery may not recognize this season's strain. A livestock monitoring system trained on one breed may struggle on another. And unlike manufacturing, where defect examples accumulate continuously, agricultural anomalies are seasonal and sometimes rare by definition.

Edge requirements are non-negotiable. Many fields have no connectivity, and barns have intermittent broadband at best. A system designed with a hard cloud dependency will fail precisely when it's needed: in the middle of a field at 15 km/h, or in a barn during the early morning hours when disease alerts are most valuable. Edge-first architecture isn't a nice-to-have in agriculture; it's a prerequisite.
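
One edge-first pattern is store-and-forward: inference and alert generation run on the device, and alerts wait in a local outbox until a link is available. The class below is a hypothetical in-memory sketch; `send` is an assumed callback that returns `True` only when delivery succeeds.

```python
from collections import deque

class AlertOutbox:
    """Buffers alerts produced by on-device inference and delivers them when a link is up."""

    def __init__(self, send, max_pending=1000):
        self._send = send  # callable(alert) -> bool, True if delivered
        self._pending = deque(maxlen=max_pending)  # oldest alerts dropped when full

    def record(self, alert):
        self._pending.append(alert)

    def flush(self):
        """Try to deliver alerts in order; stop at the first failure and keep the rest."""
        delivered = 0
        while self._pending:
            if not self._send(self._pending[0]):
                break  # link down: retry on the next flush
            self._pending.popleft()
            delivered += 1
        return delivered

    def __len__(self):
        return len(self._pending)
```

Usage: call `record` whenever the model raises an alert and `flush` on a timer. A production version would persist the queue to disk so alerts survive a power loss, and might prioritize urgent alerts over dropping the oldest.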

Seasonal learning cycles slow everything down. One iteration per season is the reality for many crop applications. If the model doesn't generalize well enough in March, you may not know until harvest in September, and you won't be able to retrain on corrected data until the following year. This makes the quality of training data and the robustness of the initial model far more consequential than in environments where feedback loops are fast.

Domain expertise is essential for labeling. A stressed plant looks different to an agronomist than it does to a computer vision engineer. A cow with early-stage lameness may walk in a way that's subtle enough to require veterinary expertise to label correctly. Without that domain knowledge in the annotation process, models learn the wrong thing from the right images.

Farmer trust and UX are make-or-break. A livestock farmer who receives a flood of low-confidence alerts, or who has to switch between five different platforms to act on a recommendation, will abandon the system within weeks. Agricultural CV deployments that survive long-term are consistently the ones that surface simple, actionable alerts in the tools farmers already use — not the ones with the most sophisticated model architecture.
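
Two simple controls go a long way against alert fatigue: drop detections below a confidence threshold, and suppress repeat alerts for the same animal and condition within a cooldown window. A minimal sketch; the 0.8 threshold and six-hour cooldown are hypothetical defaults a farm would tune.

```python
class AlertFilter:
    """Suppresses low-confidence detections and repeat alerts for the same animal."""

    def __init__(self, min_confidence=0.8, cooldown_s=6 * 3600):
        self.min_confidence = min_confidence
        self.cooldown_s = cooldown_s
        self._last_sent = {}  # (subject_id, condition) -> time of last alert, in seconds

    def should_alert(self, subject_id, condition, confidence, now_s):
        if confidence < self.min_confidence:
            return False  # too uncertain to interrupt the farmer
        key = (subject_id, condition)
        last = self._last_sent.get(key)
        if last is not None and now_s - last < self.cooldown_s:
            return False  # already alerted on this animal and condition recently
        self._last_sent[key] = now_s
        return True
```

Alerts that pass the filter should then go to whatever channel the farmer already checks, such as a text message or the herd management software, rather than to yet another dashboard.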

A Different Approach

The failure patterns above point to the same set of deployment principles that successful agricultural CV programs share — and they map directly to the architectural choices vfrog is built around.

Start with one bottleneck on one farm

Every successful agricultural CV deployment in the case studies above started narrow. CattleEye started at the parlor exit. IBM and Paulman Farms started on a defined corn disease detection task. The sorting line vendors start with one fruit variety and a limited defect taxonomy.

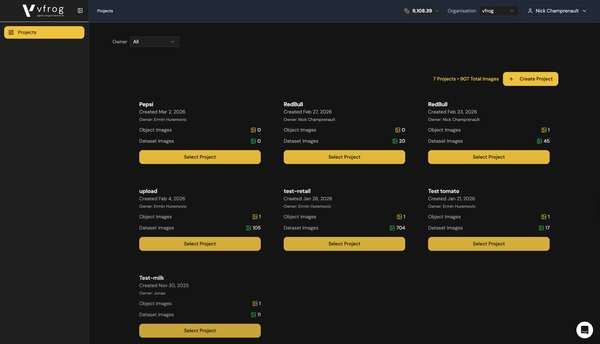

A narrow, well-scoped pilot validates the model on real farm data, in real environmental conditions, before any scale commitment. It also generates the labeled data that makes the next iteration better. Every vfrog enterprise engagement starts with a proof of concept on a single, high-value bottleneck — proven in production before anything expands. Pilots start at $600.

Solve the data problem with synthetic generation

The seasonal data scarcity problem in agriculture is precisely what synthetic data is built for. Our pipeline converts 2D images of crops, produce, or animals into photorealistic 3D renders across varied lighting conditions, growth stages, disease presentations, and environmental contexts.

Drone imagery of the same crop both healthy and under drought stress. A cow's gait pattern at different stages of lameness. A fruit at different maturity levels under different lighting angles. These variations can be generated synthetically, providing the training diversity that would take seasons of field data collection to accumulate naturally.

Edge deployment is the only architecture that makes sense in the field

Our models are built for on-device inference from the ground up. Sub-100ms detection without cloud dependency. Designed for environments where connectivity is intermittent or absent. For precision spraying, livestock monitoring in remote barns, and drone-based edge processing, this isn't an optimization — it's the baseline requirement.

Small, task-specific models outperform generic ones in variable agricultural environments

A generic large vision model trained on millions of object categories is exactly the wrong architecture for agricultural applications. It carries computational overhead that is incompatible with edge hardware, and its accuracy on specific agricultural tasks — lameness detection in a specific breed, disease identification in a specific crop variety — is diluted by the breadth of what it knows.

A model trained specifically on dairy cattle gait patterns, or on the weed species found in a specific geography, or on the defect classes relevant to a specific buyer's grading standard, outperforms generic alternatives on the specific task because every parameter is working on the actual problem.

Drift management built for seasonal reality

In agriculture, model drift isn't a gradual degradation — it's a seasonal event. New crop varieties, new disease strains, new weed populations, new animal cohorts. Our production feedback loop captures edge cases as they appear in operational data, surfaces them for expert review, and incorporates them into the training pipeline before the next season. The model improves continuously rather than degrading silently between manual retraining cycles.
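
A production feedback loop of this kind can be sketched with two signals: predictions in an uncertain confidence band are queued for expert labeling, and a sustained drop in mean confidence against the validation baseline raises a drift flag. This is an illustrative sketch, not vfrog's pipeline; the band, window size, and threshold are hypothetical, and falling confidence is only a rough proxy, since a model can be confidently wrong on a new disease strain.

```python
from collections import deque
from statistics import mean

class DriftMonitor:
    """Flags uncertain predictions for expert review and watches for a drop in confidence."""

    def __init__(self, baseline_mean_conf, window=500, max_drop=0.10, review_band=(0.4, 0.7)):
        self.baseline = baseline_mean_conf  # mean confidence measured at validation time
        self.recent = deque(maxlen=window)  # rolling window of production confidences
        self.max_drop = max_drop
        self.review_band = review_band      # confidences the model is unsure about
        self.review_queue = []              # sample ids for agronomist or vet labeling

    def observe(self, sample_id, confidence):
        self.recent.append(confidence)
        low, high = self.review_band
        if low <= confidence < high:
            self.review_queue.append(sample_id)

    def drifted(self):
        if len(self.recent) < self.recent.maxlen:
            return False  # not enough production data yet
        return self.baseline - mean(self.recent) > self.max_drop
```

Samples in `review_queue`, once labeled by domain experts, become the training data for the pre-season retraining run.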

The Opportunity Nobody Is Capturing

The agricultural CV deployments that make industry press — IBM's 10,000-acre precision program, DeLaval's automated dairy scoring, high-automation vertical farms — represent a tiny fraction of the operations where CV would deliver clear ROI.

The dairy farmer with 200 cows who wants lameness detection but not a six-month custom project. The mid-size orchard operation that wants yield estimation but can't justify enterprise software pricing. The grain cooperative that wants post-harvest foreign object detection without a seven-figure installation.

These are the operations the current tools weren't built for. And they represent the majority of global agricultural production.

Computer vision in agriculture doesn't have to start at $100,000 and two seasons. It can start with one use case, one farm, one bottleneck — proven in production before anything scales.

That's what we're building at vfrog.

vfrog is an agentic computer vision platform that lets any developer or enterprise build and deploy task-specific small vision models — no ML expertise required. Enterprise pilots start at $600. Self-serve platform access starts at $49/month.